Introduction

OTT and online media content is becoming increasingly widespread around the globe. The way users access media and entertainment content can be translated into meaningful insights that help companies develop and fine-tune their online services and business strategies.

Combined with modern big data storage and processing technologies, the largely untapped field of user-generated data analytics deserves to be investigated and put to efficient use. By harnessing this information, businesses can predict problems before they occur and prevent harm to customers.

With resources reallocated to where they are needed at a given moment, it becomes possible to identify the pros and cons of the existing development strategy and make significant service improvements. Visibility helps control the service and make the right decisions to increase business value.

Broadly speaking, data analysis falls into two major approaches: batch analytics and real-time analytics. Batch analytics applies to static data that has already been generated, aggregated, and stored. Real-time analytics handles data that is constantly updated, processing it as soon as it becomes available.

There is another important difference between the approaches. In batch analysis, data is stored and processed offline to provide a backward-looking overview of what has already happened. Real-time analysis delivers a forward-looking overview of events, their drivers, and their consequences. This helps anticipate what may happen next and supports more reasoned and beneficial decisions.
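The contrast between the two approaches can be illustrated with a minimal sketch. The event format, `batch_channel_counts`, and `StreamingChannelCounts` are hypothetical names chosen for illustration and are not part of the system described in this article. The batch function needs the complete stored data set, while the streaming class updates its result with every incoming event.

```python
from collections import Counter

# Hypothetical channel-switch events: (user_id, channel).
EVENTS = [("u1", "news"), ("u2", "sports"), ("u3", "news"), ("u1", "sports")]


def batch_channel_counts(stored_events):
    """Batch: count switches per channel over a complete, stored data set."""
    return Counter(channel for _, channel in stored_events)


class StreamingChannelCounts:
    """Real-time: keep a running count and update it on every event."""

    def __init__(self):
        self.counts = Counter()

    def on_event(self, user_id, channel):
        self.counts[channel] += 1
        # A snapshot of the result is available right after each event.
        return dict(self.counts)


streaming = StreamingChannelCounts()
snapshots = [streaming.on_event(user, channel) for user, channel in EVENTS]
batch_result = batch_channel_counts(EVENTS)

print(snapshots[0])        # result after the first event only
print(dict(batch_result))  # result after all events have been stored
```

Both paths end with the same totals. The difference is when the answer becomes available: after every event in the streaming case, and only after the whole data set is stored in the batch case.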

When choosing the best option for an online business, it is necessary to consider the capabilities of both approaches, their application areas, and their potential to keep up with the growth in data production and consumption. As overall data production increases, the share of streaming data is expected to grow year by year.

At some point, batch processing will lose its efficiency, as it takes a long time and requires specialized hardware. The only substitute will be stream analytics, thanks to its flexibility, timeliness, and velocity. Today, some types of data already require complex processing before they can be stored for later batch analysis, and this trend will only grow stronger.

In this article, we would like to demonstrate how Oxagile uses its hands-on expertise to handle real-time streaming analytics.

Architecture Background

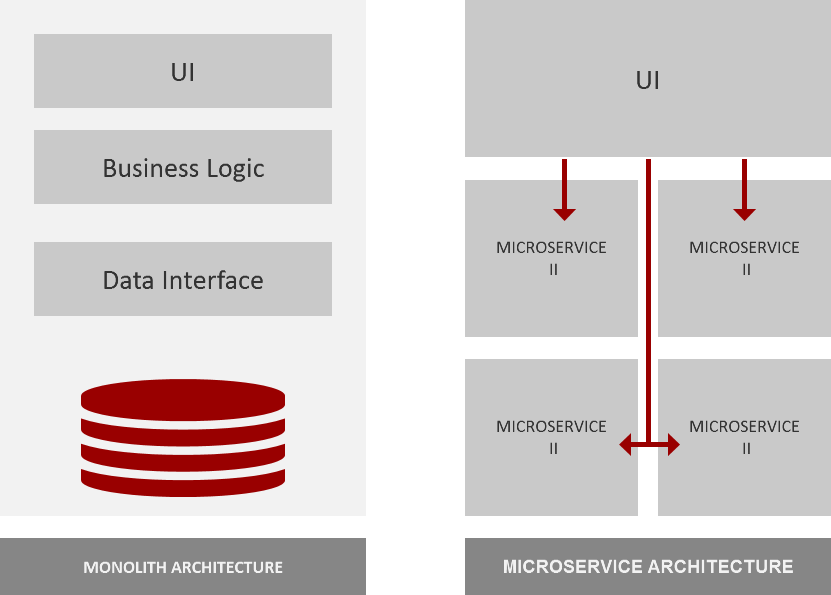

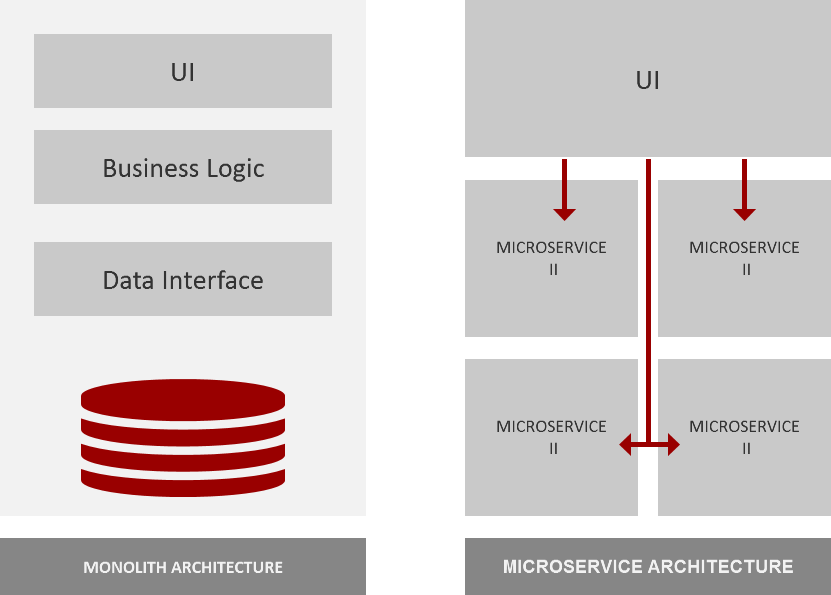

Over recent years, the benefits of systems composed of many isolated elements rather than a single piece have become obvious to developers and project managers. There are two major approaches to architecture development: monolithic and microservice-based. In brief, the main difference between them lies in the agility of their management and operations.

In monolithic systems, all software components are tightly interconnected; the architecture is rigid and shows poor scalability and flexibility. In contrast, microservice-based architectures consist of many isolated and independent elements connected by a messaging service. The idea is to make them interchangeable, scalable, and crash-resistant.

An error in one element of a microservice architecture will not crash the whole system or stop data processing. Moreover, a single element can be updated without affecting the rest of the system, and elements can be added or removed without breaking the whole architecture.
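The fault isolation described above can be sketched with a toy in-process message bus. This is only an illustration under simplifying assumptions: `MessageBus`, the topic name, and the two services are hypothetical, and a real deployment would use a network messaging service rather than direct function calls.

```python
from collections import defaultdict


class MessageBus:
    """Toy stand-in for a messaging service connecting isolated elements."""

    def __init__(self):
        self._subscribers = defaultdict(list)
        self.errors = []

    def subscribe(self, topic, name, handler):
        self._subscribers[topic].append((name, handler))

    def unsubscribe(self, topic, name):
        self._subscribers[topic] = [
            (n, h) for n, h in self._subscribers[topic] if n != name
        ]

    def publish(self, topic, message):
        for name, handler in list(self._subscribers[topic]):
            try:
                handler(message)
            except Exception as exc:
                # A failing element is recorded, but delivery to the
                # other elements continues.
                self.errors.append((name, repr(exc)))


stored, counted = [], []


def storage_service(message):
    stored.append(message)


def broken_stats_service(message):
    raise RuntimeError("stats service is down")


bus = MessageBus()
bus.subscribe("events", "storage", storage_service)
bus.subscribe("events", "stats", broken_stats_service)
bus.publish("events", {"user": "u1", "channel": "news"})

# Swap out the faulty element without touching the storage service.
bus.unsubscribe("events", "stats")
bus.subscribe("events", "stats", counted.append)
bus.publish("events", {"user": "u2", "channel": "sports"})

print(len(stored), len(bus.errors), len(counted))
```

The storage element receives both messages even though the statistics element failed on the first one and was replaced before the second.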

On the other hand, monolithic systems can be more stable, since, unlike microservice-based architectures, they are less affected by changes made by individual developers.

In general, either approach can suit business needs well, since they have very different capabilities and application areas. In this article, we do not focus on comparing their pros and cons. Instead, we want to show how beneficial a microservice architecture can be for real-time data processing.

Implementation

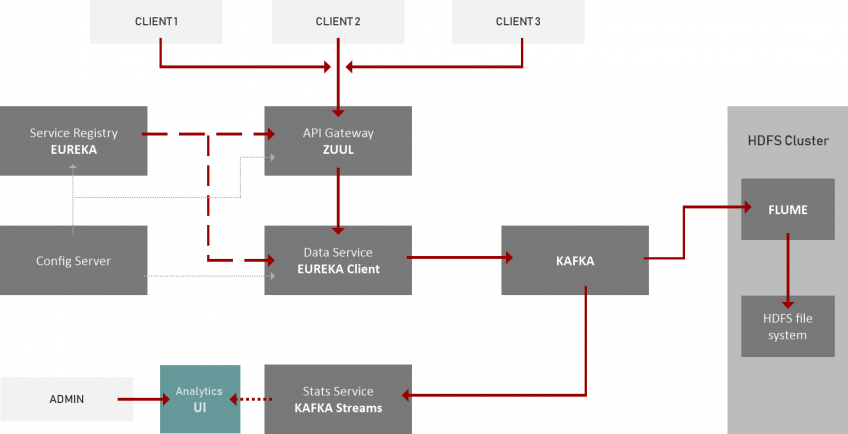

To create a representative sample system, it is reasonable to use Netflix OSS microservices, which are widely regarded as effective, simple to implement, and able to quickly connect to external services and form a functional system for big data processing and record analysis.

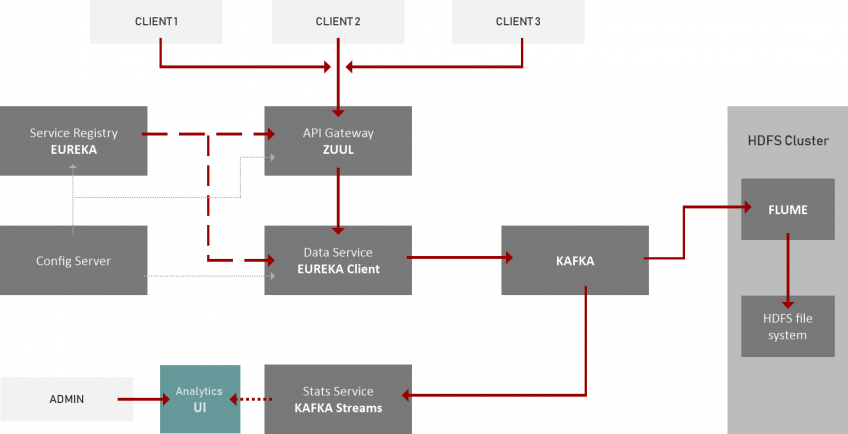

In our case, we take the basic and most popular microservices, such as Zuul and Eureka, which help create the system infrastructure and ensure communication between architecture elements. Eureka is used to register the other architecture components, which are supported by configuration servers where all the configurations and settings of the system’s elements are kept.

Zuul is a gateway that provides routing, monitoring, security, and load balancing. It routes requests to specific services deeper in the system. Once a call passes through the Zuul API, it goes to a custom data service that receives user-generated data and transfers it to the HDFS infrastructure.
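The interplay between service registration and gateway routing can be sketched as follows. This is not the Zuul or Eureka API: `ServiceRegistry`, `Gateway`, the route prefix, and the addresses are hypothetical stand-ins that only illustrate the idea of looking up registered instances and spreading requests across them in round-robin fashion.

```python
class ServiceRegistry:
    """Stand-in for a discovery service: maps service names to instances."""

    def __init__(self):
        self._instances = {}
        self._next = {}

    def register(self, service, address):
        self._instances.setdefault(service, []).append(address)
        self._next.setdefault(service, 0)

    def pick(self, service):
        """Return the next instance of a service, round-robin."""
        instances = self._instances.get(service, [])
        if not instances:
            raise LookupError(f"no registered instance of {service}")
        index = self._next[service] % len(instances)
        self._next[service] += 1
        return instances[index]


class Gateway:
    """Stand-in for an API gateway with naive path-prefix routing."""

    def __init__(self, registry, routes):
        self._registry = registry
        # Check longer prefixes first so the most specific route wins.
        self._routes = sorted(
            routes.items(), key=lambda item: len(item[0]), reverse=True
        )

    def route(self, path):
        for prefix, service in self._routes:
            if path.startswith(prefix):
                return self._registry.pick(service) + path[len(prefix):]
        raise LookupError(f"no route for {path}")


registry = ServiceRegistry()
registry.register("data-service", "http://10.0.0.1:8080")
registry.register("data-service", "http://10.0.0.2:8080")

gateway = Gateway(registry, {"/data": "data-service"})
targets = [gateway.route("/data/events") for _ in range(3)]
print(targets)
```

Because the gateway resolves addresses through the registry at request time, instances can be added without changing the routing table.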

The HDFS infrastructure consists of three parts: a file system cluster, which can be any system suitable for your business objectives (for example, the best-known option, Hadoop, works well with Apache Flume); Apache Flume, which collects and transfers huge amounts of log data between the queuing software and the HDFS cluster; and Apache Kafka, a streaming platform that queues messages and records coming from the user and transfers them to the HDFS cluster.
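The hand-off from a queue to cluster storage can be pictured as buffering records and writing them out in batches. The sketch below is a simplified illustration only: `BatchingSink` and its batch size are hypothetical, and an in-memory list stands in for files on the cluster. It does not reproduce how Flume or Kafka actually move data.

```python
from collections import deque


class BatchingSink:
    """Buffers queued records and writes them to storage in batches."""

    def __init__(self, batch_size):
        self.batch_size = batch_size
        self._buffer = deque()
        # Each inner list stands in for one file written to the cluster.
        self.written_batches = []

    def offer(self, record):
        self._buffer.append(record)
        if len(self._buffer) >= self.batch_size:
            self.flush()

    def flush(self):
        """Write out whatever is buffered, even if the batch is not full."""
        if self._buffer:
            self.written_batches.append(list(self._buffer))
            self._buffer.clear()


sink = BatchingSink(batch_size=3)
for number in range(7):
    sink.offer({"event": number})

full_batches = len(sink.written_batches)  # batches written automatically
sink.flush()                              # write the remaining record
print(full_batches, len(sink.written_batches))
```

Writing in batches keeps the number of storage operations low, at the cost of a short delay before a record becomes visible in storage.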

Apart from receiving and storing data, it is important to focus on the main challenge of the task: creating real-time analytics that detect trends and patterns in user activity. To do this, we implement an additional service that extracts statistics and meaningful insights on online user activity from the data stored by topic in Kafka.

Built as a Kafka Streams application, our Stats Service receives and aggregates the data from Kafka, grouping and rearranging it to produce a meaningful report. All this happens while new calls keep arriving, so the statistics are generated in real time.
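The kind of grouping and aggregation the Stats Service performs can be sketched in plain Python. This is not Kafka Streams code and not the actual service: `StatsAggregator`, the event shape, and the chosen metrics (current viewers per channel and per-user visit counts) are assumptions made for illustration.

```python
from collections import Counter, defaultdict


class StatsAggregator:
    """Updates channel load and user preferences on every switch event."""

    def __init__(self):
        self.current_channel = {}                      # user -> channel now
        self.channel_load = Counter()                  # channel -> viewers
        self.user_preferences = defaultdict(Counter)   # user -> channel visits

    def on_switch(self, user_id, channel):
        previous = self.current_channel.get(user_id)
        if previous is not None:
            # The user leaves the previous channel.
            self.channel_load[previous] -= 1
        self.current_channel[user_id] = channel
        self.channel_load[channel] += 1
        self.user_preferences[user_id][channel] += 1

    def favourite(self, user_id):
        """Most visited channel of a user, or None if nothing is known."""
        visits = self.user_preferences.get(user_id)
        if not visits:
            return None
        return visits.most_common(1)[0][0]


stats = StatsAggregator()
for user, channel in [
    ("u1", "news"),
    ("u2", "news"),
    ("u1", "sports"),
    ("u1", "news"),
    ("u3", "sports"),
]:
    stats.on_switch(user, channel)

print(dict(stats.channel_load))
print(stats.favourite("u1"))
```

Every event updates the aggregates in place, so a report can be read at any moment without reprocessing earlier events.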

As a result, we obtain a flexible pipeline in which elements can easily be added to or removed from the system as business requirements change. We can implement the service to track user activity across an OTT platform or website, collect the data, and efficiently store it in an HDFS cluster for further use.

On top of this, we can add an analytics microservice to extract meaningful insights from our data in real time, open up new opportunities for business decision-making, and suggest technical improvements. Furthermore, a flexible microservice architecture enables us to increase the scale and complexity of analytics systems, improving them in line with new requests and challenges.

Because the system mock-up is built on microservices, developers do not need to update the whole system every time they change a core function such as analytics.

Load Test Results

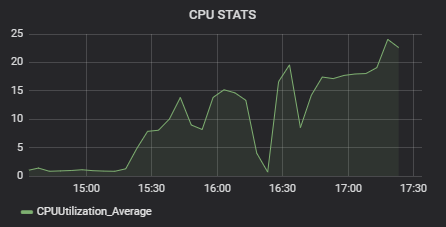

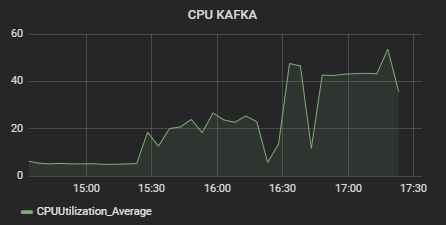

To test the architecture, we modeled a situation in which 500,000 users simultaneously use an OTT service, switching channels once a minute. For a sample system with this architecture, the performance was quite impressive: Kafka showed 50% CPU load, and the Stats Service showed 25%.
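To put the modeled load into perspective, the event rate can be worked out directly from the two figures above. The calculation assumes that channel switches are spread evenly over time, which is a simplification and not something the test setup states.

```python
USERS = 500_000
SWITCHES_PER_USER_PER_MINUTE = 1

# Assumption: switches are spread evenly across each minute.
events_per_minute = USERS * SWITCHES_PER_USER_PER_MINUTE
events_per_second = events_per_minute / 60
events_per_day = events_per_minute * 60 * 24

print(f"{events_per_minute:,} events per minute")
print(f"{events_per_second:,.0f} events per second")  # about 8,333
print(f"{events_per_day:,} events per day")           # 720,000,000
```

In other words, the pipeline has to absorb roughly 8,300 channel-switch events every second on a sustained basis.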

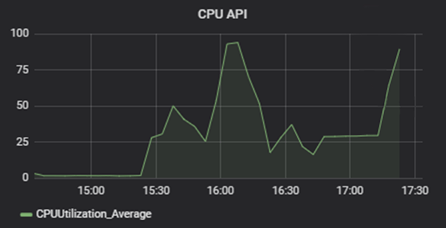

Tests were run on AWS t2.micro instances. The Zuul, Eureka, Config Server, and Data Service cluster was the most resource-intensive, reaching up to 80% CPU load, with the majority consumed by the in-house Data Service element.

Wrapping Up

To conclude, using microservices can be an effective and reasonable way to scale and optimize product development while achieving good performance. Their flexible and scalable structure makes it possible to extend basic data receiving and storage functionality with additional components, such as a data analytics tool.

With Kafka and Kafka Streams, the data analytics tool can analyze a wide range of user activity data. In our case, the microservice-based system enabled us to determine each user’s channel preferences and calculate the channel load. Because Kafka can collect any kind of user-generated data, the solution is flexible, and the reports can be configured and tailored to specific business needs.

Hanna Sharkouskaya, R&D Manager